NASA Fleet: Otherworldly Robotics


About This Project

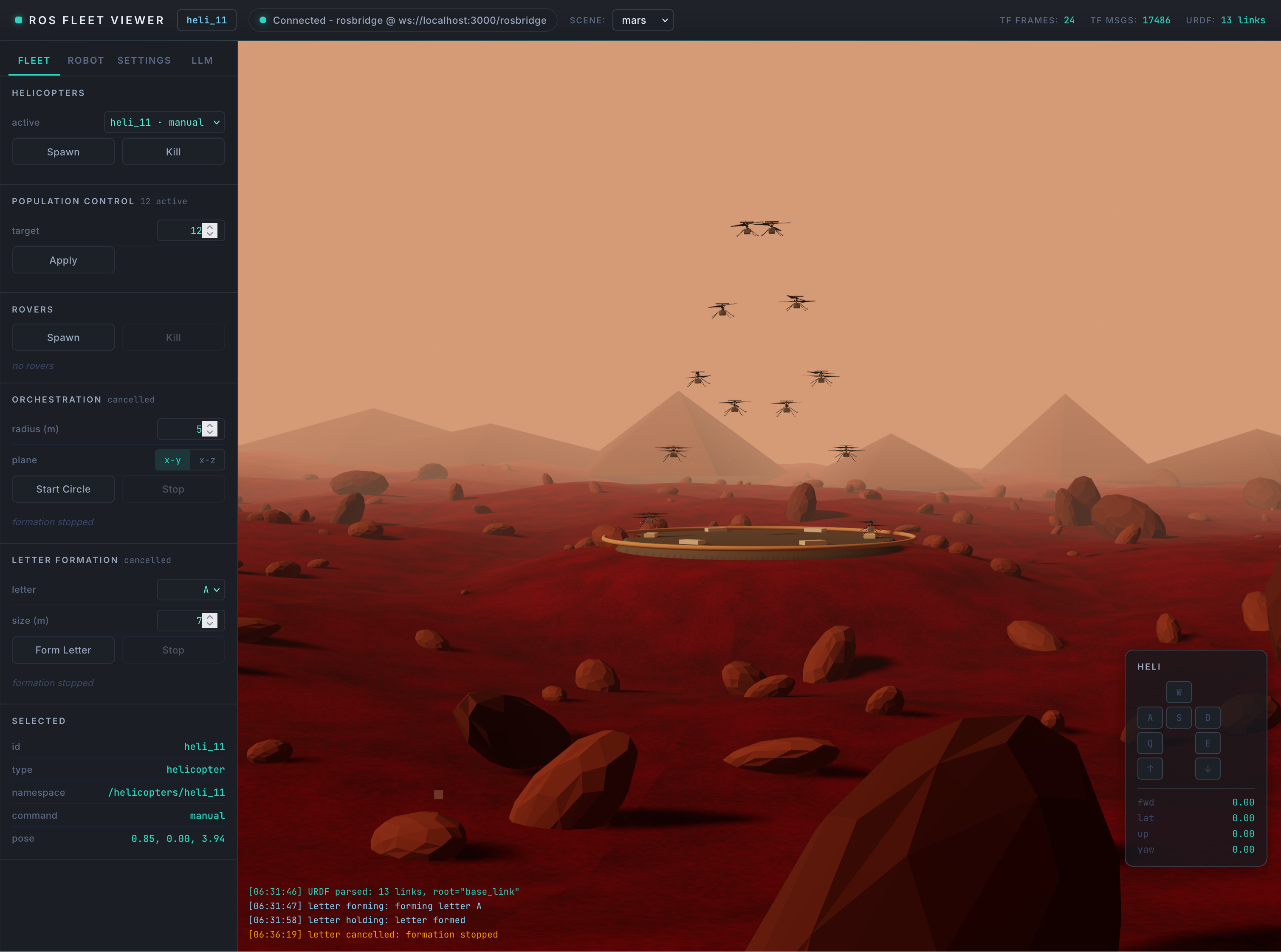

NASA Fleet is a browser-based robotics simulator that lets you spawn and pilot a fleet of NASA-inspired robots — including the Ingenuity Mars Helicopter and the Perseverance rover — across lifelike Mars, Earth, and city environments. Everything runs on a real ROS2 + Gazebo stack streamed to the browser, so you can drive, fly, and orchestrate robots without installing a thing.

I built this project to bridge the gap between professional robotics tooling and the web. Most ROS2 work happens in heavyweight Linux environments with RViz and Gazebo running locally; NASA Fleet pulls that whole stack into a containerized service and exposes it through a React frontend that talks to rosbridge over WebSockets.

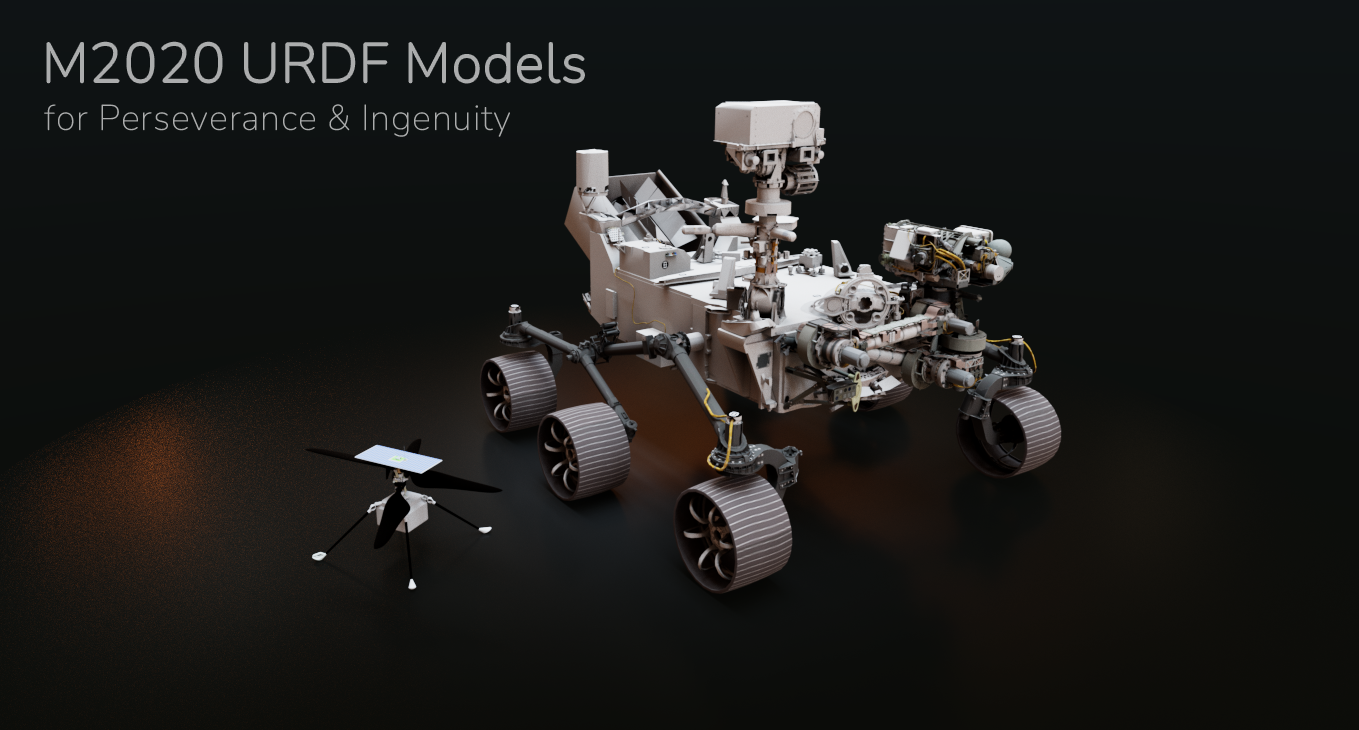

Robots in the Fleet

The fleet uses official URDF models from NASA JPL's m2020-urdf-models repository, so the Ingenuity helicopter and Perseverance rover you see in the simulator are the same descriptions JPL uses for the real mission hardware. Each robot is spawned as its own ROS2 node, with topics namespaced per instance so you can run and control many of them at once.
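As a rough illustration of per-instance namespacing, the sketch below builds topic names for a small fleet. The naming scheme (`/ingenuity_0`, `/perseverance_0`) and the `cmd_vel` topic are assumptions made for this example, not names taken from the project.

```python
from typing import List

# Robot kinds mentioned in this write-up; the string identifiers are hypothetical.
KNOWN_KINDS = ("ingenuity", "perseverance")


def robot_namespace(kind: str, index: int) -> str:
    """Return a per-instance namespace such as '/ingenuity_0' (assumed scheme)."""
    if kind not in KNOWN_KINDS:
        raise ValueError(f"unknown robot kind: {kind!r}")
    if index < 0:
        raise ValueError("index must be non-negative")
    return f"/{kind}_{index}"


def namespaced_topic(namespace: str, topic: str) -> str:
    """Join a namespace and a relative topic name with exactly one slash."""
    return f"{namespace.rstrip('/')}/{topic.lstrip('/')}"


# Two helicopters and one rover, each with its own command topic.
fleet: List[str] = [robot_namespace("ingenuity", i) for i in range(2)]
fleet.append(robot_namespace("perseverance", 0))
cmd_topics = [namespaced_topic(ns, "cmd_vel") for ns in fleet]
print(cmd_topics)
# ['/ingenuity_0/cmd_vel', '/ingenuity_1/cmd_vel', '/perseverance_0/cmd_vel']
```

Because every instance owns a distinct prefix, a command published to one robot's topic cannot be picked up by another.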

Key Features

🚁 Multi-Robot Fleet

Spawn and despawn Ingenuity helicopters and Perseverance rovers at runtime, each as its own ROS2 node

🌎 Lifelike Scenes

Detailed Mars, Earth, and city environments built as Gazebo SDF worlds at real-world scale

🎮 Browser Controls

WASD + Shift/Ctrl flight, Q/E yaw, and an autopilot mode with line, square, and return-to-pad patterns

📡 Live ROS Topics

Sidebar inspector for subscribed topics, TFs, and joint states, with a publisher panel for sending commands back

⚛️ React Frontend

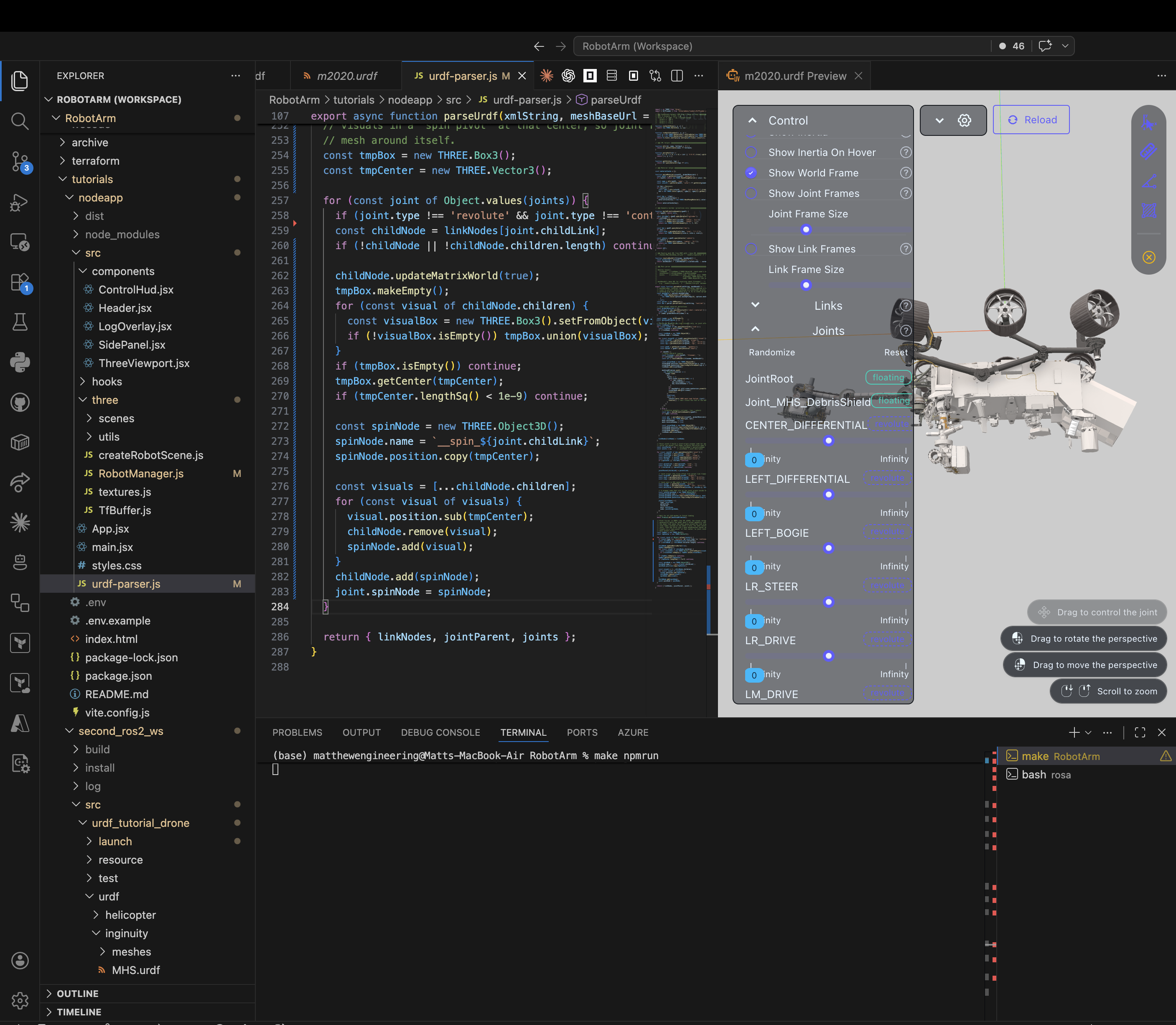

Componentized React app using roslibjs and three.js to render URDFs parsed live from /robot_description

☁️ Cloud Deployed

Single Docker container running ROS2 + rosbridge + Gazebo + Node, deployed to Azure Container Apps via Terraform

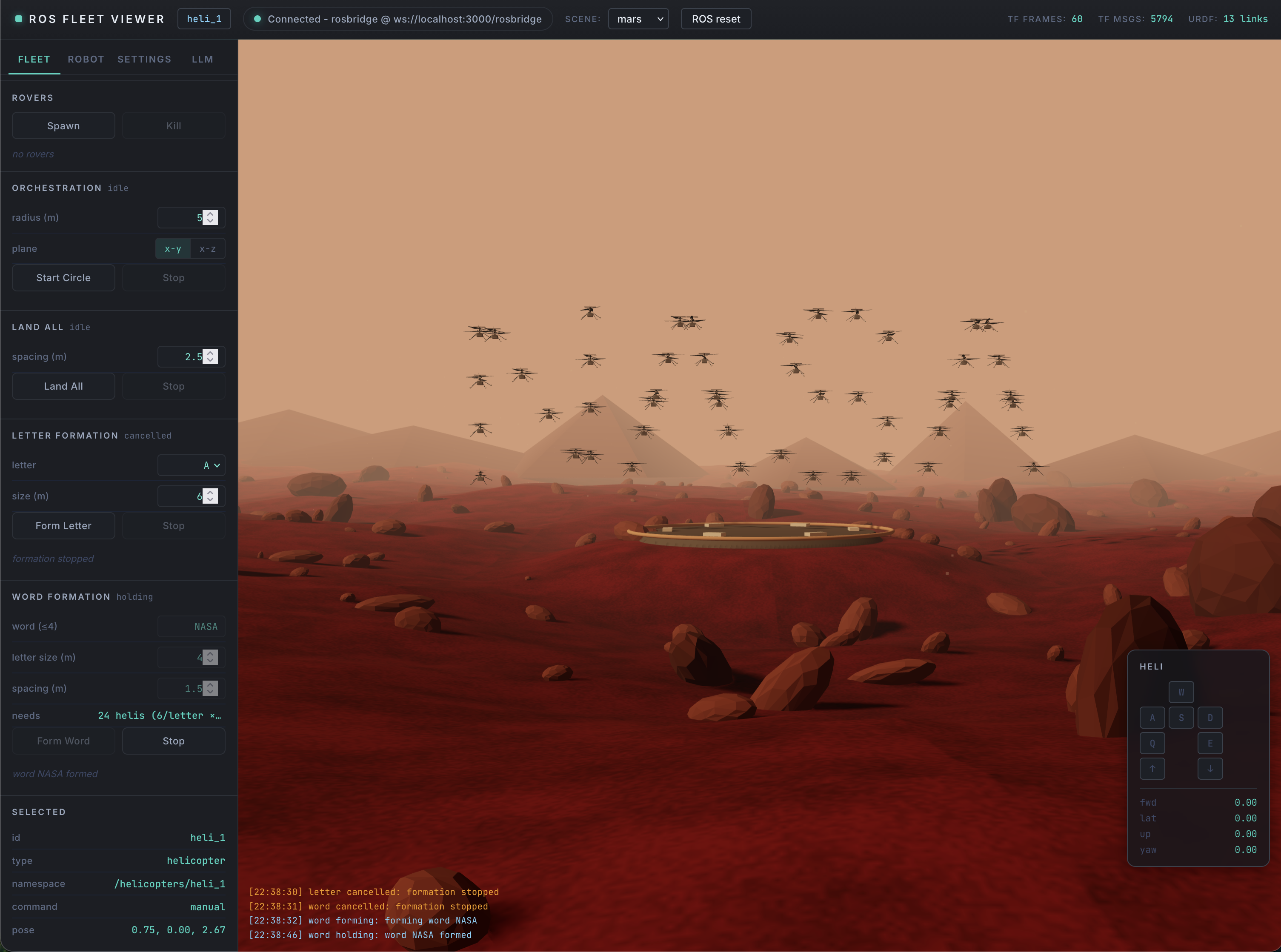
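The Browser Controls feature above maps keys to motion. One way to picture that mapping is a table from keys to the axes of a `geometry_msgs/Twist`-shaped message. The axis assignments, signs, and default speeds below are assumptions for illustration (they follow the usual ROS convention of x forward, y left, z up), and the project's frontend is JavaScript rather than Python.

```python
from typing import Dict, Iterable

# Hypothetical bindings: (field group, axis, sign). Not taken from the project source.
KEY_BINDINGS = {
    "w": ("linear", "x", +1.0),        # forward
    "s": ("linear", "x", -1.0),        # backward
    "a": ("linear", "y", +1.0),        # strafe left
    "d": ("linear", "y", -1.0),        # strafe right
    "shift": ("linear", "z", +1.0),    # climb
    "control": ("linear", "z", -1.0),  # descend
    "q": ("angular", "z", +1.0),       # yaw left
    "e": ("angular", "z", -1.0),       # yaw right
}


def keys_to_twist(
    pressed: Iterable[str], speed: float = 1.0, yaw_rate: float = 0.5
) -> Dict[str, Dict[str, float]]:
    """Convert the set of currently pressed keys into a Twist-shaped dict."""
    twist = {
        "linear": {"x": 0.0, "y": 0.0, "z": 0.0},
        "angular": {"x": 0.0, "y": 0.0, "z": 0.0},
    }
    # De-duplicate so a repeated key event does not double the velocity.
    for key in {k.lower() for k in pressed}:
        binding = KEY_BINDINGS.get(key)
        if binding is None:
            continue  # ignore keys that are not bound
        group, axis, sign = binding
        scale = speed if group == "linear" else yaw_rate
        twist[group][axis] += sign * scale
    return twist


climb_forward = keys_to_twist(["w", "shift"])
```

Opposing keys such as W and S cancel out in this sketch, which gives a natural "hover" when both are held.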

AI Orchestration with NASA JPL's ROSA

The most innovative part of this project isn't the simulator — it's the layer on top of it. NASA Fleet integrates NASA JPL's ROSA (the ROS Agent) to drive the fleet with large language models. ROSA is a real tool published by JPL for natural-language control of ROS systems, and here it acts as the "mission commander" sitting between a human operator and dozens of independent robot nodes.

Instead of clicking buttons to fly one helicopter at a time, you can issue high-level intent — "scout the ridge with three helicopters and have the rover meet at the landing pad" — and an LLM-backed agent decomposes it into per-robot ROS2 commands, publishing namespaced topics to each instance. That orchestration layer is where the ML work lives: prompt design for spatial reasoning, tool-calling schemas for ROS topics and services, and feedback loops that consume live telemetry (TFs, joint states, autopilot status) so the agent can react to what the robots are actually doing.
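To make the decomposition step concrete, here is a minimal sketch of how a plan returned by an agent could be validated and fanned out into per-robot commands. The plan format, the `go_to` tool name, and the `goal_pose` topic are all hypothetical; this is not ROSA's API, only an illustration of the idea of checking tool calls before they reach the robots.

```python
from typing import Dict, List, Set, Tuple

Command = Tuple[str, Dict[str, float]]


def dispatch_plan(plan: List[Dict], fleet: Set[str]) -> List[Command]:
    """Turn a list of tool calls into (namespaced topic, target) pairs.

    Raises ValueError for tools or robots the fleet does not know about,
    so a hallucinated robot name never turns into a published command.
    """
    commands: List[Command] = []
    for step in plan:
        if step.get("tool") != "go_to":
            raise ValueError(f"unsupported tool: {step.get('tool')!r}")
        args = step["args"]
        robot = args["robot"]
        if robot not in fleet:
            raise ValueError(f"unknown robot: {robot!r}")
        target = {axis: float(args[axis]) for axis in ("x", "y", "z")}
        commands.append((f"/{robot}/goal_pose", target))
    return commands


# Hypothetical fleet and a hand-written plan standing in for model output.
fleet = {"ingenuity_0", "ingenuity_1", "perseverance_0"}
plan = [
    {"tool": "go_to", "args": {"robot": "ingenuity_0", "x": 40, "y": 10, "z": 5}},
    {"tool": "go_to", "args": {"robot": "perseverance_0", "x": 0, "y": 0, "z": 0}},
]
commands = dispatch_plan(plan, fleet)
```

Keeping validation separate from the model call means the same checks apply regardless of which inference backend produced the plan.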

Inference is pluggable via Hugging Face inference providers, so the same orchestration stack can run against different model backends. The result is a small but real demonstration of multi-robot fleet management driven by LLMs — built on the exact tools NASA JPL publishes for their own mission software.

Technical Stack

The project is built on ROS2 Humble with Gazebo for physics and rendering, rosbridge_server for the WebSocket bridge, and a React + Vite frontend using roslibjs and three.js. The entire stack (ROS, Gazebo, Xvfb/noVNC, and the Node app) is packaged into a single Docker image and deployed to Azure Container Apps with infrastructure defined in Terraform.
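For readers unfamiliar with the WebSocket bridge, rosbridge exchanges JSON messages with an `op` field such as `advertise`, `publish`, or `subscribe`. The sketch below builds the text frames a client would send to publish a velocity command; the topic name `/ingenuity_0/cmd_vel` is an assumption, and in the actual frontend roslibjs constructs these messages on the client's behalf.

```python
import json
from typing import Dict


def rosbridge_advertise(topic: str, msg_type: str) -> str:
    """Build the JSON frame announcing that this client will publish on a topic."""
    return json.dumps({"op": "advertise", "topic": topic, "type": msg_type})


def rosbridge_publish(topic: str, msg: Dict) -> str:
    """Build the JSON frame carrying one message for a topic."""
    return json.dumps({"op": "publish", "topic": topic, "msg": msg})


# Hypothetical per-robot command topic.
topic = "/ingenuity_0/cmd_vel"
twist = {
    "linear": {"x": 1.0, "y": 0.0, "z": 0.5},
    "angular": {"x": 0.0, "y": 0.0, "z": 0.0},
}

advertise_frame = rosbridge_advertise(topic, "geometry_msgs/msg/Twist")
publish_frame = rosbridge_publish(topic, twist)
print(advertise_frame)
print(publish_frame)
```

Each string would be sent as a single WebSocket text frame to the rosbridge server, which republishes the payload on the ROS2 side.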

The ROS2 nodes themselves are written in C++, the same language used for production flight software. The only abstraction in this project is that I bypassed the low-level helicopter controls: incoming commands to the node are currently applied as position changes directly rather than driving simulated motors. There's no reason these nodes couldn't be wired up to real production flight software built on F´ (F Prime) or written to the JSF AV C++ coding standard or another safety-critical C++ standard used for spacecraft and aerial vehicles. The topic interface stays the same; only the control loop underneath changes.
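One reading of "position changes directly" is a purely kinematic update: the commanded velocity is integrated into the pose each tick, with no motor or rotor dynamics in between. The sketch below shows that idea in Python for consistency with the other examples. The project's nodes are C++, and the `Pose` type, the `step` function, and the body-frame velocity convention are assumptions for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0
    yaw: float = 0.0  # radians, counter-clockwise about the z axis


def step(pose: Pose, vx: float, vy: float, vz: float, yaw_rate: float, dt: float) -> Pose:
    """Advance the pose by one time step using simple Euler integration.

    vx and vy are body-frame velocities, so they are rotated by the current
    yaw before being added to the world-frame position.
    """
    cos_yaw, sin_yaw = math.cos(pose.yaw), math.sin(pose.yaw)
    return Pose(
        x=pose.x + (vx * cos_yaw - vy * sin_yaw) * dt,
        y=pose.y + (vx * sin_yaw + vy * cos_yaw) * dt,
        z=pose.z + vz * dt,
        yaw=pose.yaw + yaw_rate * dt,
    )


# Fly forward at 1 m/s while climbing at 0.5 m/s for two seconds.
after = step(Pose(), vx=1.0, vy=0.0, vz=0.5, yaw_rate=0.0, dt=2.0)
```

Swapping this update for a real control loop would change only the body of `step`; the inputs (velocity commands) and outputs (pose) would stay the same, which matches the point about the topic interface staying stable.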

The AI layer is implemented on top of NASA JPL's ROSA (installed from PyPI per the developer documentation), with LLM inference routed through Hugging Face inference providers. URDF models for Ingenuity and Perseverance come from NASA JPL's m2020-urdf-models.